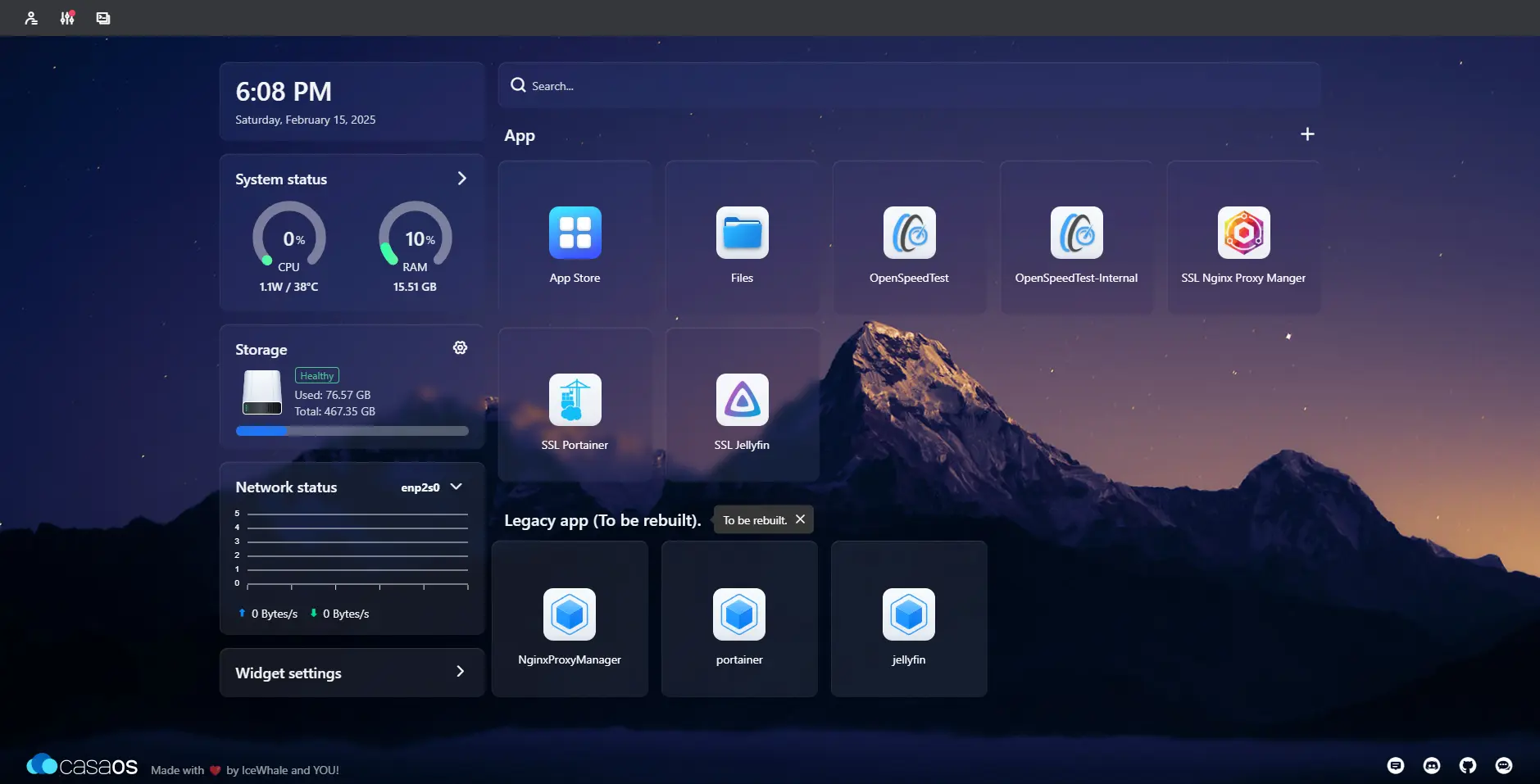

Mastering vLLM: Deploying a Multi-Model Inference Stack on Consumer GPUs

Introduction

In the world of MLOps, serving Large Language Models (LLMs) efficiently is just as important as training them. While tools like Ollama are fantastic for quick local setups, vLLM is the...